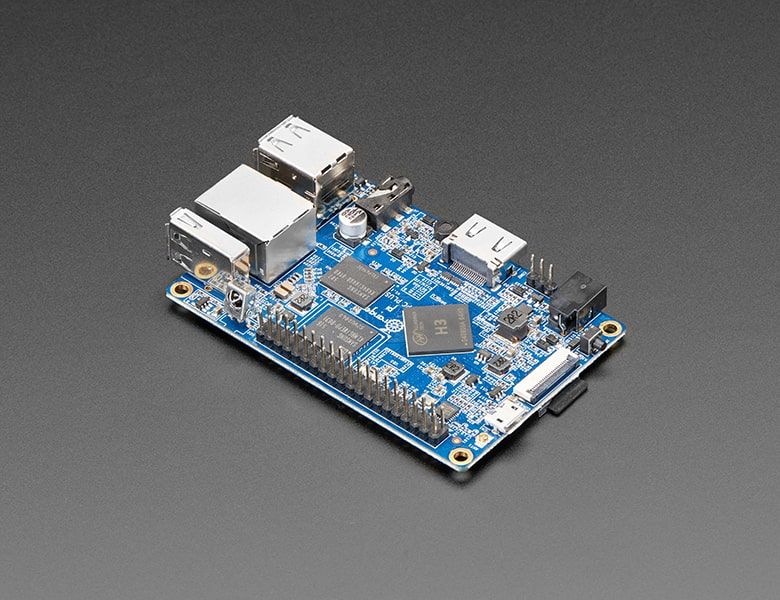

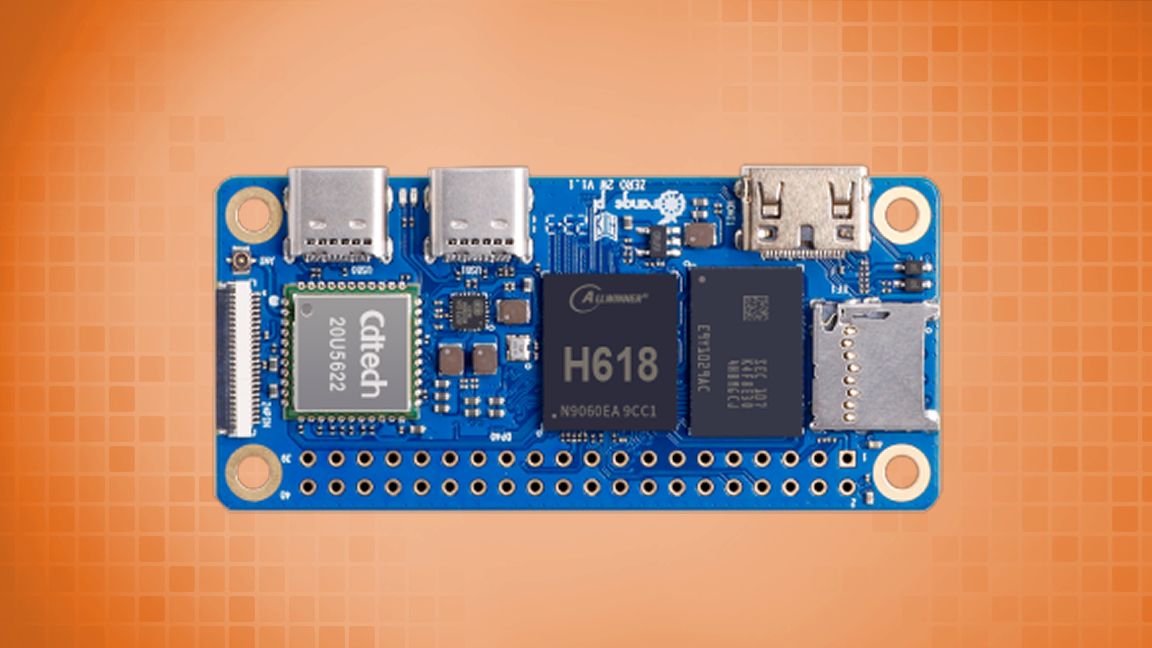

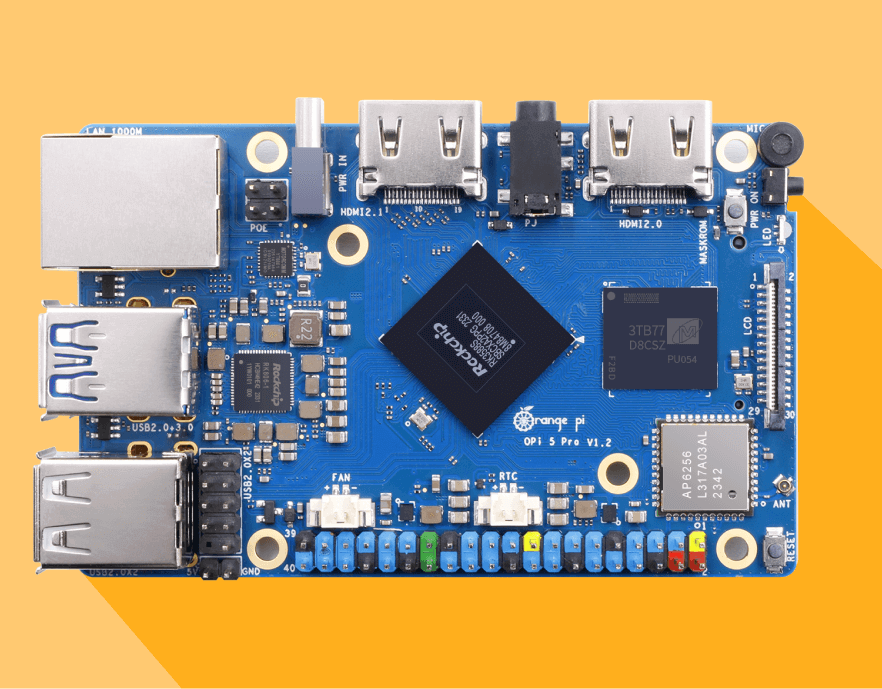

MLC GPU-Accelerated LLM on a $100 Orange Pi

4.8

(296)

Écrire un avis

Plus

€ 28.50

En Stock

Description

MLC Making AMD GPUs competitive for LLM inference

PowerInfer: 11x Speed up LLaMA II Inference On a Local GPU

XGBoost (@XGBoostProject) / X

Junru Shao on LinkedIn: #pytorch #webgpu

MLC LLM归档- 天地一沙鸥

Junru Shao on LinkedIn: MLC LLM now supports running Vicuña-7b on

Luis Ceze (@luisceze) / X

Bohan Hou (@bohanhou1998) / X

Project] GPU-Accelerated LLM on a $100 Orange Pi : r/MachineLearning

Orange pi5归档- 天地一沙鸥

ScaleLLM: Unlocking Llama2-13B LLM Inference on Consumer GPU RTX

Tu pourrais aussi aimer